Month 1 closed out with two major milestones: a working AWS deployment pipeline and a fully migrated database schema.

On the infrastructure side, the EKS cluster is live in us-east-1 with two t3.medium worker nodes. Traffic flows through an Application Load Balancer with SSL termination handled by AWS Certificate Manager, routing to four subdomains: the production and staging frontends and their corresponding API endpoints. DNS is managed through Route 53. GitHub Actions deploys automatically to the staging namespace on merge and holds for manual approval before touching production. The CI/CD connection uses OIDC federation through a dedicated IAM role, which means no long-lived AWS credentials are stored as secrets. To keep costs manageable during development, the cluster scales its worker nodes to zero when not in use and spins back up for demos and presentations.

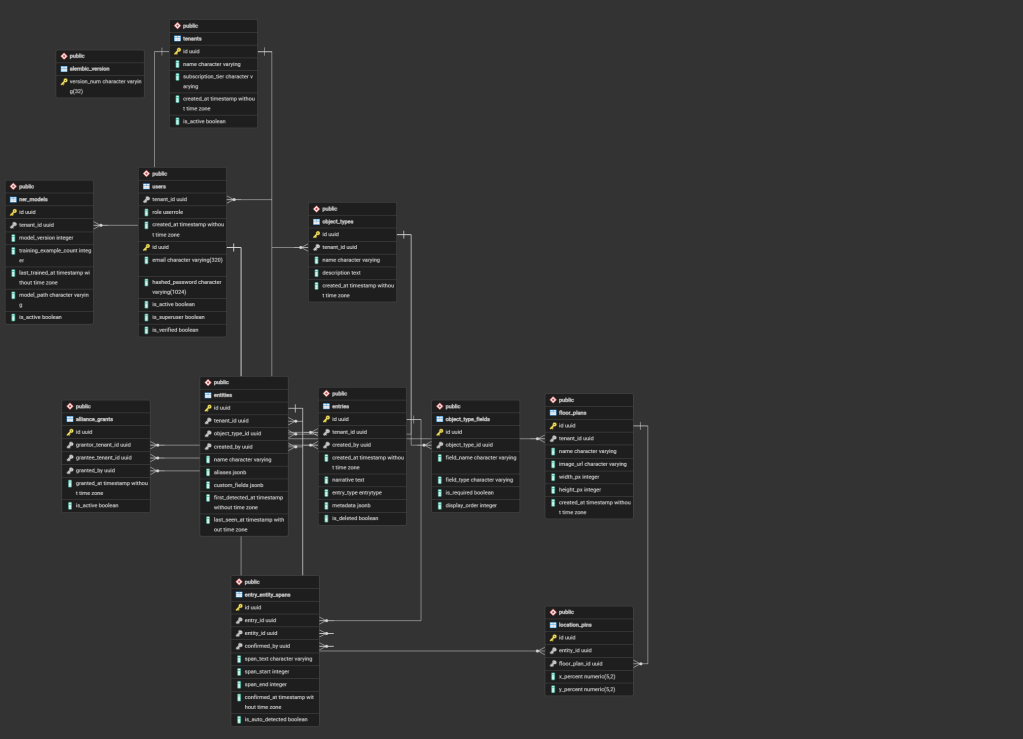
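
As a rough illustration of the OIDC setup described above, the trust policy on the dedicated IAM role might look something like the sketch below. The account ID and repository name are placeholders, the helper `github_oidc_trust_policy` is hypothetical, and the role's real policy may scope the `sub` claim more tightly (for example, to a branch or environment).

```python
import json

# Issuer host for GitHub Actions OIDC tokens.
GITHUB_OIDC_HOST = "token.actions.githubusercontent.com"


def github_oidc_trust_policy(account_id: str, repo: str) -> dict:
    """Build an IAM trust policy that lets workflows in `repo` assume a role.

    Hypothetical helper: `account_id` and `repo` are placeholders, not values
    taken from the project.
    """
    provider_arn = f"arn:aws:iam::{account_id}:oidc-provider/{GITHUB_OIDC_HOST}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Federated": provider_arn},
                # Short-lived credentials are issued by exchanging the OIDC token.
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringEquals": {f"{GITHUB_OIDC_HOST}:aud": "sts.amazonaws.com"},
                    # Restrict the role to workflows from one repository.
                    "StringLike": {f"{GITHUB_OIDC_HOST}:sub": f"repo:{repo}:*"},
                },
            }
        ],
    }


if __name__ == "__main__":
    policy = github_oidc_trust_policy("123456789012", "example-org/example-repo")
    print(json.dumps(policy, indent=2))
```

Because the workflow exchanges a per-run token for temporary credentials, nothing in the repository's secrets needs to be rotated or can leak as a long-lived key.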

On the database side, all 12 tables are now live in PostgreSQL 18. The schema covers tenants, users, the object type system, the entity registry, entries, NER span records, floor plans, location pins, alliance grants, and NER model metadata. Alembic manages migrations, and `alembic upgrade head` applied the full schema cleanly to the live instance.

Two bugs were resolved along the way: a circular import in the auth module and a misplaced `engine.dispose()` call that was closing database connections mid-request instead of at application shutdown. A small schema refinement also landed, removing the title field from the Entry model to match the SDD spec.
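
The `engine.dispose()` fix comes down to where the call sits in the application lifecycle. The sketch below illustrates the corrected pattern using only the standard library: `FakeEngine` is a stand-in that merely records whether `dispose()` ran, and `lifespan` and `handle_request` are hypothetical names, not the project's actual code. The assumption is that the real application ties disposal to a similar startup/shutdown hook.

```python
import asyncio
from contextlib import asynccontextmanager


class FakeEngine:
    """Stand-in for a database engine; it only tracks pool state."""

    def __init__(self) -> None:
        self.disposed = False
        self.queries = 0

    def execute(self, sql: str) -> str:
        # Mimics the failure mode of the bug: a disposed pool cannot serve queries.
        if self.disposed:
            raise RuntimeError("connection pool already disposed")
        self.queries += 1
        return f"ok: {sql}"

    def dispose(self) -> None:
        self.disposed = True


@asynccontextmanager
async def lifespan(engine: FakeEngine):
    """Application-level lifecycle: the pool lives as long as the app does."""
    try:
        yield engine
    finally:
        # Dispose exactly once, at shutdown, never inside a request handler.
        engine.dispose()


async def handle_request(engine: FakeEngine, sql: str) -> str:
    # Request handlers borrow the engine and leave the pool alone.
    return engine.execute(sql)


async def main():
    engine = FakeEngine()
    async with lifespan(engine) as app_engine:
        results = [await handle_request(app_engine, f"SELECT {i}") for i in range(3)]
    return engine, results
```

Running `asyncio.run(main())` serves all three requests against a live pool and disposes the engine only after the `async with` block exits, which is the ordering the bug fix restores.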